Announced at NVIDIA GTC 2026 Keynote

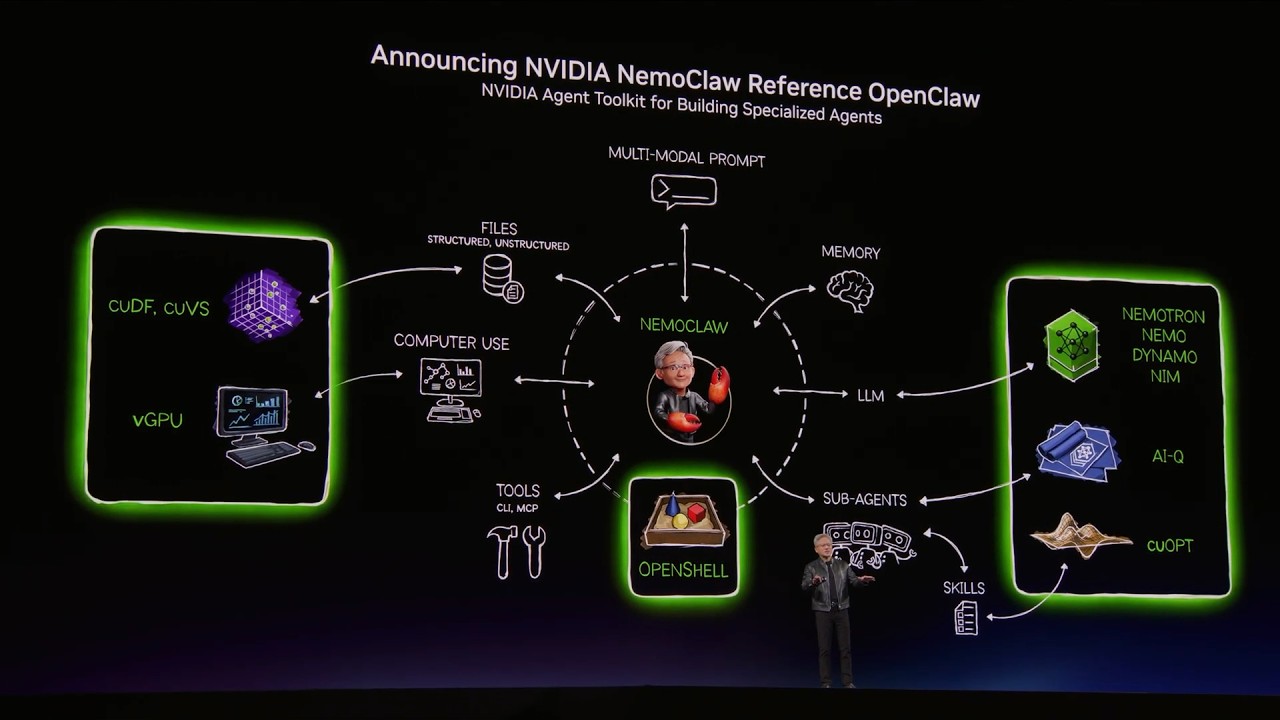

Jensen Huang officially announced Nemo Claw at the GTC 2026 keynote in San Jose on March 16, 2026. As reported by Wired and major outlets covering NVIDIA, Nemo Claw marks the company's strategic move into the AI agent software ecosystem.

GTC Keynote 2026: Nemo Claw AI & Nemotron 3 Super

At the keynote, Huang presented Nemo Claw alongside Nemotron 3 Super, a 120B-parameter open model optimized for agentic AI workflows. Nemo Claw AI was introduced as NVIDIA's answer to the enterprise security challenges plaguing OpenClaw AI.

The NVIDIA GTC keynote also showcased the new Vera CPU (Vera Rubin architecture), DLSS 5 for graphics, and the NVIDIA DGX Spark personal AI supercomputer, all part of NVIDIA's vision for AI agents everywhere. NVDA stock saw significant movement following the GTC announcements, reflecting investor confidence in NVIDIA's AI agent strategy.

Watch: NVIDIA Announces Nemo Claw at GTC 2026

See Jensen Huang introduce Nemo Claw AI at the NVIDIA GTC 2026 keynote. Learn why NVIDIA built an enterprise-grade OpenClaw alternative with OpenShell security, and how it fits into the broader NVIDIA AI agent stack alongside Nemotron 3 Super, DGX Spark, and the Vera Rubin architecture.